SAN MATEO, CA — February 4, 2026: Waste sorting has been a staple robotics challenge for years because it forces vision, planning, and motion to work as one. A new build on Hackster pairs YOLO11 with the ROS 2-based ArmPi Ultra, delivering real-time detection on a Raspberry Pi 5 and smooth pick-and-place cycles that don’t stall between frames.

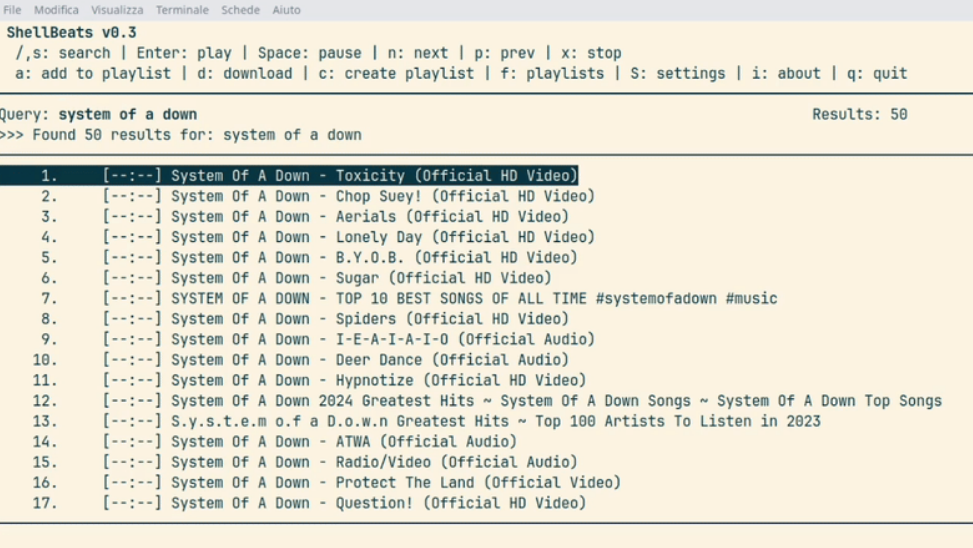

Why YOLO11 on the edge

- YOLO11’s optimized backbone keeps per-frame inference in the millisecond range while maintaining solid mAP (mean average precision), a practical fit for single-board computers.

- Running YOLO11n (nano) on a Raspberry Pi 5 keeps latency low enough that the arm doesn’t “pause and think” before each grasp, so sorting stays continuous.

- Transfer learning lets you fine-tune on specific waste classes (recyclables, organics, hazardous) without training from scratch.

Hardware + software stack

- ArmPi Ultra robotic arm with a 3D depth camera for spatial perception.

- Raspberry Pi 5 handling YOLO11n inference and ROS 2 nodes.

- Clean ROS 2 APIs to bridge detection, planning, and actuation.

- Inverse kinematics (IK) to turn target positions derived from camera coordinates into joint angles for stable, repeatable grips.
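
To make the IK item concrete, here is a minimal sketch for a planar two-link arm, solved analytically with the law of cosines. It is an illustration only: the ArmPi Ultra has more joints and the build relies on its own solver, and the function names and the 0.13 m link lengths are assumptions, not values from the project.

```python
import math


def two_link_ik(x, y, l1, l2, elbow_up=True):
    """Return (shoulder, elbow) angles in radians for a planar two-link arm.

    Raises ValueError when the target cannot be reached with these link lengths.
    """
    r2 = x * x + y * y
    # Law of cosines: the target distance fixes the elbow angle.
    cos_elbow = (r2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_elbow) > 1.0 + 1e-9:
        raise ValueError("target out of reach")
    cos_elbow = max(-1.0, min(1.0, cos_elbow))  # absorb rounding at the boundary
    elbow = math.acos(cos_elbow)
    if elbow_up:
        elbow = -elbow
    # Shoulder angle = direction to the target minus the offset added by link 2.
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def forward_kinematics(shoulder, elbow, l1, l2):
    """Return the (x, y) tip position for the given joint angles."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


# Hypothetical link lengths in meters, chosen only for the example.
L1, L2 = 0.13, 0.13
angles = two_link_ik(0.15, 0.10, L1, L2)
print("joint angles (rad):", angles)
print("reached position:", forward_kinematics(*angles, L1, L2))
```

Feeding the solved angles back through the forward kinematics is a cheap sanity check: the reached position should match the requested target, and an unreachable target should raise rather than return a bogus pose.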

Data-to-deployment workflow

- Capture: Use the onboard depth camera to collect images and depth, so labels carry real-world geometry.

- Train: Fine-tune a pre-trained YOLO11 model on your labeled waste set; iterate on class balance and augmentations.

- Deploy: Push the model through a ROS 2 interface; a single call kicks off detect → classify → pick/place.
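
For the "iterate on class balance" part of the Train step, a quick count over the label files is often enough to spot a starved class. The sketch below assumes detection labels in the usual YOLO text format (one `class_id x_center y_center width height` row per object); the class ids, the `count_classes` and `underrepresented` helpers, and the 20% threshold are illustrative assumptions, not part of the original build.

```python
from collections import Counter
from pathlib import Path

# Assumed id-to-name mapping; the article names the categories but not their ids.
CLASS_NAMES = {0: "recyclable", 1: "organic", 2: "hazardous"}


def count_classes(label_dir):
    """Count labeled instances per class id across YOLO-format .txt files."""
    counts = Counter()
    for label_file in Path(label_dir).glob("*.txt"):
        for line in label_file.read_text().splitlines():
            fields = line.split()
            if len(fields) < 5:
                continue  # skip blank or malformed rows
            counts[int(fields[0])] += 1
    return counts


def underrepresented(counts, min_share=0.2):
    """Return names of classes whose share of all instances is below min_share."""
    total = sum(counts.values())
    if total == 0:
        return sorted(CLASS_NAMES.values())
    return sorted(
        name
        for class_id, name in CLASS_NAMES.items()
        if counts.get(class_id, 0) / total < min_share
    )
```

Calling `underrepresented(count_classes("path/to/labels"))` before each training run gives a short list of classes that may need more captures or heavier augmentation.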

From pixels to picks

- Depth-aware detections localize objects in 3D, reducing misses on hard cases such as crumpled cans and reflective plastics.

- The IK solver translates target poses into joint angles, prioritizing approach vectors that keep grips stable.

- Result: Fluid, back-to-back cycles without jittery starts and stops.
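
The step from a detection to a 3D target can be sketched with the standard pinhole camera model: the center pixel of a bounding box plus its depth reading gives a point in the camera frame, and a fixed rotation and translation move that point into the arm's base frame. The intrinsics, the camera pose, and the function names below are placeholder assumptions; real values would come from the camera's calibration and the arm's mounting geometry.

```python
# Hypothetical intrinsics (pixels) for a 640x480 depth stream.
FX, FY, CX, CY = 600.0, 600.0, 320.0, 240.0

# Hypothetical extrinsics: camera looking straight down, 0.40 m above the
# base origin and 0.10 m forward of it. Rows are the camera axes in base frame.
CAMERA_ROTATION = (
    (1.0, 0.0, 0.0),
    (0.0, -1.0, 0.0),
    (0.0, 0.0, -1.0),
)
CAMERA_TRANSLATION = (0.10, 0.0, 0.40)


def deproject(u, v, depth_m, fx=FX, fy=FY, cx=CX, cy=CY):
    """Convert pixel (u, v) with depth in meters to a camera-frame point."""
    if depth_m <= 0:
        # Many depth cameras report 0 where no valid reading exists.
        raise ValueError("invalid depth reading")
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def to_base_frame(p_cam, rotation=CAMERA_ROTATION, translation=CAMERA_TRANSLATION):
    """Apply p_base = R * p_cam + t using plain tuples."""
    return tuple(
        sum(rotation[i][j] * p_cam[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


# Center pixel of a detected box and the depth sampled there.
point_cam = deproject(380, 240, 0.30)
point_base = to_base_frame(point_cam)
print("camera frame:", point_cam)
print("base frame:", point_base)
```

The base-frame point is what an IK solver would receive as its target position, which is why rejecting invalid depth readings early matters: a zero depth would otherwise collapse every detection onto the camera origin.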

Natural-language control, embodied tasks

Beyond color rules, the system can route tasks via a multimodal LLM: “Clear the table, but keep recyclables on the left.” The LLM parses intent and sequences the steps, and the arm executes them, categorizing items by environmental rules and sorting accordingly. It’s a useful bridge between rigid scripts and task-level autonomy.
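
One way to keep an LLM-driven planner safe is to have it reply with a structured plan and validate that plan before any motion runs. The sketch below assumes the model is prompted to return a JSON list of `{"category", "zone"}` steps; that schema, the zone names, and the `parse_plan` helper are hypothetical and are not taken from the project.

```python
import json

# Assumed vocabulary; anything outside these sets is rejected.
KNOWN_CATEGORIES = {"recyclable", "organic", "hazardous"}
KNOWN_ZONES = {"left", "right", "bin"}


def parse_plan(llm_reply):
    """Validate a JSON plan and return an ordered list of (category, zone) steps."""
    try:
        steps = json.loads(llm_reply)
    except json.JSONDecodeError as exc:
        raise ValueError(f"plan is not valid JSON: {exc}") from exc
    if not isinstance(steps, list):
        raise ValueError("plan must be a JSON list of steps")

    plan = []
    for index, step in enumerate(steps):
        if not isinstance(step, dict):
            raise ValueError(f"step {index} is not an object")
        category = step.get("category")
        zone = step.get("zone")
        if not isinstance(category, str) or category not in KNOWN_CATEGORIES:
            raise ValueError(f"step {index}: unknown category {category!r}")
        if not isinstance(zone, str) or zone not in KNOWN_ZONES:
            raise ValueError(f"step {index}: unknown zone {zone!r}")
        plan.append((category, zone))
    return plan


# Example reply for "Clear the table, but keep recyclables on the left."
reply = '[{"category": "recyclable", "zone": "left"}, {"category": "organic", "zone": "bin"}]'
for category, zone in parse_plan(reply):
    print(f"move every {category} item to zone '{zone}'")
```

Rejecting the whole plan on the first unknown category or zone is a deliberate choice here: a partially executed plan on a physical arm is usually worse than asking the model to try again.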

Build paths and extensions

- Follow the ArmPi Ultra tutorials to get the base sorter running quickly.

- Add a Mecanum chassis for a mobile pickup bot, or a mic + voice node for hands-free control.

- Tinker with motion planning: test alternative IK solvers, grasp strategies, or collision layers in ROS 2.

- Iterate on datasets—depth-informed labels are worth the extra effort for cluttered scenes.

- Project page: YOLO11 x ArmPi Ultra on Hackster

The Editor’s Take: This build hits the sweet spot for DIYers: a readable ROS 2 pipeline, edge-ready YOLO11n, and a depth-first dataset loop you can actually maintain. It’s a solid reference for anyone moving from demo scripts to dependable, workshop-grade embodied robots—easy to extend, hard to outgrow.

Credit and Source: Hackster.io